All you need to know to start your app using LLMs and RAG

Where to start if you are building a SaaS based on LLMs

Without opening a never-ending discussion on intelligence, we cannot avoid saying that written language is very relevant for people. It lets us communicate many different types of information.

Companies spend considerable time training a model and then publish it at a given point in time. Any information that appears after training is therefore missing from the model. Moreover, much information lives in unstructured formats such as emails, slides, and custom documents, which are not available to those companies when they train the model.

In summary, while the knowledge of GPT and other large language models (LLMs) is broad, ensuring that a model possesses knowledge specific to one organization or area of expertise is a critical challenge. Fine-tuning and Retrieval-Augmented Generation (RAG) are two tools used to address it.

Fine-Tuning

Fine-tuning refers to training an existing language model with additional data to optimise it for a specific task.

Now the hard truth: even though a pre-trained language model can be fine-tuned to adapt it to a particular task, this does not actually enable you to include your own domain knowledge in the model. The model has already undergone extensive training on a vast quantity of general language data, and your domain data is typically too small to supersede what it learned before.

As a result, even though the model can be fine-tuned to deliver accurate answers occasionally, it will frequently fail because of how much it depends on the pre-training data.

Retrieval-Augmented Generation (RAG)

With RAG we do not change the LLM at all. Instead, we concentrate on the prompt itself and add pertinent context to it, which is known as context injection.

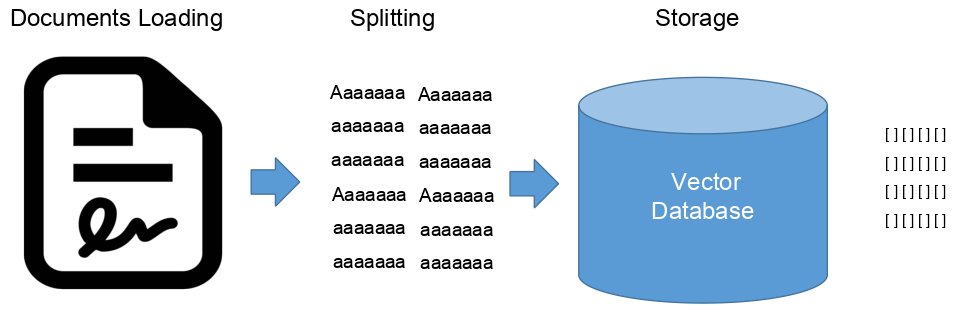

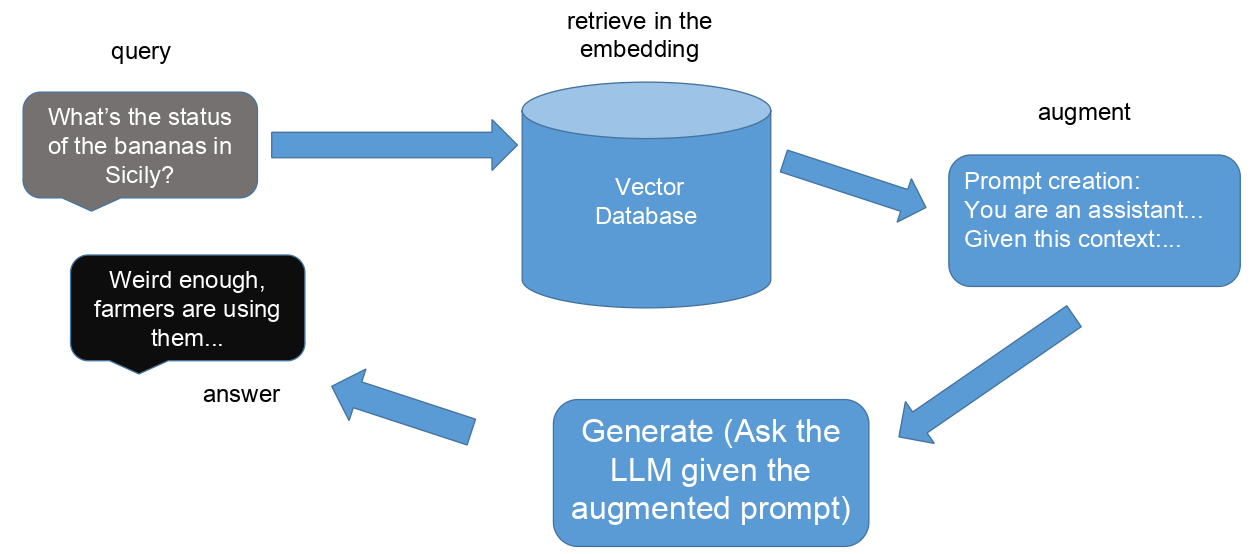

Thus, we must consider how to give the prompt accurate information. The accompanying image shows a schematic representation of the entire system. We need a procedure that can determine which data is most pertinent to a question, and for that our computer must be able to compare text fragments with one another. This approach is sometimes also called in-context learning or context injection.
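To make context injection more concrete, here is a minimal sketch in plain Python. The helper `build_prompt` and the two text snippets are hypothetical and exist only for illustration; in a real system the snippets would come from a retrieval step.

```python
# Hypothetical helper: assembles a prompt by injecting retrieved context.
def build_prompt(question: str, context_chunks: list[str]) -> str:
    """Return a prompt asking the model to answer only from the given context."""
    context = "\n\n".join(context_chunks)
    return (
        "Answer the following question based only on the provided context:\n"
        f"<context>\n{context}\n</context>\n"
        f"Question: {question}"
    )


# Made-up snippets standing in for retrieved company documents
chunks = [
    "Our support desk is open Monday to Friday, 9:00 to 17:00.",
    "Refunds are processed within 14 days of the request.",
]
prompt = build_prompt("How long do refunds take?", chunks)
print(prompt)
```

The LLM itself is untouched: only the text sent to it changes, which is why the same idea works with any model.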

In practice, this is done with embeddings: text is converted into vectors, so that it can be represented in a multidimensional embedding space. In many cases, points that lie closer to one another in this space correspond to texts with similar meaning. We index our vectors and store them in a vector database to speed up the similarity search.
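As a rough illustration of similarity search, the sketch below ranks a few texts by cosine similarity to a query. The three-dimensional vectors are invented for the example; real embedding models produce vectors with hundreds or thousands of dimensions, and a vector database replaces the simple sort with an index.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Toy "embeddings", invented for illustration only
embedded_chunks = {
    "Citrus farming in Sicily": [0.9, 0.1, 0.0],
    "Irrigation and drought": [0.7, 0.6, 0.1],
    "Football results": [0.0, 0.1, 0.9],
}
query_vector = [0.8, 0.2, 0.0]  # pretend embedding of a question about agriculture

# Rank the stored texts from most to least similar to the query
ranked = sorted(
    embedded_chunks,
    key=lambda title: cosine_similarity(query_vector, embedded_chunks[title]),
    reverse=True,
)
print(ranked)
```

The top-ranked texts are the ones that would be injected into the prompt as context.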

The idea was proposed by Lewis et al. in the paper Retrieval-Augmented Generation for Knowledge-Intensive NLP Tasks [1].

Which Model?

Several tools support this form of prompt engineering, such as LangChain, LlamaIndex, and Haystack.

Moreover, the idea does not depend on a specific LLM, but some models are more “available” for augmentation than others. This matters because, depending on what we intend to do with the augmented LLM, our use might not be legal.

First of all, not all LLMs are open source:

OpenAI’s GPT-3.5 and GPT-4: despite the name “OpenAI”, these models are closed source, though augmentation is still possible.

Gemini from Google AI is not open source.

Meta’s LLaMA models are open source, in the sense that the weights are published under Meta’s own license.

Anthropic’s Claude is not open source.

Mistral is open source, at least for the models it releases with open weights.

Furthermore, of the models listed above, only Mistral and LLaMA allow commercial use with almost no restrictions or fees.

What is LangChain, by the way?

Given those premises, let’s try an example of prompt engineering using LangChain.

LangChain is a Python framework that eases the development of LLM applications, including chatbots, summarization tools, and pretty much any other tool based on language. The library provides different components that can be combined to form so-called chains.

Those parts include:

Models: or, better, access points to different kinds of models.

Prompts: prompt management, prompt optimization, and prompt serialization.

Indexes: text splitters, vector stores, and document loaders, which allow for quicker and more effective data access.

Chains: sequences of calls that go beyond a single LLM call.

Agents: entities that use LLMs to decide what to do. They take an action, observe what happens, and repeat until they finish the work at hand. This, too, goes beyond a single LLM query.
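The idea of a chain can be sketched in a few lines of plain Python. This is not LangChain's API: `fill_prompt`, `fake_llm`, `strip_output`, and `run_chain` are hypothetical names, and `fake_llm` only echoes its input instead of calling a real model.

```python
# Plain-Python illustration of the "chain" idea; this is NOT LangChain's API.
def fill_prompt(inputs: dict) -> str:
    """Step 1: turn structured input into a prompt string."""
    return f"Summarise in one sentence: {inputs['text']}"


def fake_llm(prompt: str) -> str:
    """Step 2: stand-in for a real model call; it just echoes part of the prompt."""
    return f"[model output for: {prompt[:40]}...]"


def strip_output(raw: str) -> str:
    """Step 3: post-process the raw model output."""
    return raw.strip("[]")


def run_chain(steps, value):
    """Feed the output of each step into the next one."""
    for step in steps:
        value = step(value)
    return value


summary_chain = [fill_prompt, fake_llm, strip_output]
result = run_chain(
    summary_chain,
    {"text": "Sicilian farmers are switching to tropical crops."},
)
print(result)
```

Each step only needs to agree with its neighbours on input and output, which is what makes the components easy to swap.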

Numerous documents from a range of sources can be loaded into LangChain. The LangChain documentation contains a list of available document loaders. Loaders for PDFs, S3 buckets, HTML pages, Notion, Google Drive, and many more are among them. This is what makes the library so useful: it lets us fine-tune or augment a model based on unstructured data.

Example in Colab

Moving into practice, here is an example in Python that creates CapybaritoLLM, an LLM augmented with knowledge on contemporary agriculture and agritech issues in Sicily. As with most hosted LLMs, if we decide to use Mistral, we first need to get an API key from them.

In the following section I explain the steps to run a simple RAG query. Data are downloaded from a website and vectorized. Be careful: scraping content from the web is not always allowed, and those lines are shown for educational purposes only.

One important aspect of the RAG technique is that we store our structured and unstructured data as vector representations, more specifically in a vector database. We do not change the model itself; we extend it with this database. One popular vector database is Chroma. In this example I provide some code snippets that run in a Google Colab environment and create this vector store only temporarily.

First we need to install the necessary libraries:

!pip install langchain

!pip install langchain_community

!pip install langchain_mistralai

!pip install unstructured

!pip install faiss-gpu

Once installed, we need to import them:

from langchain_community.document_loaders import TextLoader, WebBaseLoader

from langchain_mistralai.chat_models import ChatMistralAI

from langchain_mistralai.embeddings import MistralAIEmbeddings

from langchain_community.vectorstores import FAISS

from langchain.text_splitter import RecursiveCharacterTextSplitter

from langchain.chains.combine_documents import create_stuff_documents_chain

from langchain_core.prompts import ChatPromptTemplate

from langchain.chains import create_retrieval_chain

Now we can load different types of documents. Be careful with web scraping and with copyrighted material:

# Web scraping is not always allowed; this is only for educational purposes

url = "https://www.nationalgeographic.com/environment/article/italy-sicily-agriculture-tropical-climate"

# Create a WebBaseLoader object

loader = WebBaseLoader(url)

# Load the webpage content

document = loader.load()

# Access the extracted text content

docs = document[0].page_content

At this point we can proceed with splitting the data into uniform chunks and creating the embeddings. Instead of an external vector database, I am using here the FAISS vector store from LangChain:

# Split text into chunks

text_splitter = RecursiveCharacterTextSplitter()

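# split_documents expects Document objects, so pass the loaded list, not the raw string in docs
documents = text_splitter.split_documents(document)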

api_key = "YOUR KEY AS DISCUSSED BEFORE"

# Define the embedding model

embeddings = MistralAIEmbeddings(model="mistral-embed", mistral_api_key=api_key)

# Create the vector store

vector = FAISS.from_documents(documents, embeddings)
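To make the splitting step more concrete, here is a simplified sketch of chunking with overlap in plain Python. It is an assumption-laden stand-in, not how RecursiveCharacterTextSplitter works internally: that class tries a list of separators recursively, while the hypothetical `split_into_chunks` below just slides a fixed-size window over the text.

```python
def split_into_chunks(text: str, chunk_size: int = 200, overlap: int = 50) -> list[str]:
    """Cut text into windows of chunk_size characters that overlap by `overlap`."""
    if overlap >= chunk_size:
        raise ValueError("overlap must be smaller than chunk_size")
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + chunk_size])
        # Stop once the current window has reached the end of the text
        if start + chunk_size >= len(text):
            break
    return chunks


# Made-up sample text, repeated to get something long enough to split
sample = "Sicily is adapting its agriculture to a warmer climate. " * 10
chunks = split_into_chunks(sample, chunk_size=120, overlap=20)
print(len(chunks), [len(c) for c in chunks])
```

The overlap means that a sentence cut at a chunk boundary still appears intact in one of the two neighbouring chunks, which tends to help retrieval.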

Define a retriever interface:

# Define a retriever interface

retriever = vector.as_retriever()

# Define LLM

CapybaritoLLM = ChatMistralAI(mistral_api_key=api_key)

# Define prompt template

prompt = ChatPromptTemplate.from_template("""Answer the following question based only on the provided context:

<context>

{context}

</context>

Question: {input}""")

Et voilà! We can now ask a question, and the answer should be less prone to hallucinations or outdated information:

document_chain = create_stuff_documents_chain(CapybaritoLLM, prompt)

retrieval_chain = create_retrieval_chain(retriever, document_chain)

response = retrieval_chain.invoke({"input": "What is the current situation with farmers in Sicily?"})

print(response["answer"])

As Lewis et al. [1] showed, this simple approach appears to outperform fine-tuning methodologies. This is just the beginning: there are different ways of indexing data, and that choice alone might make a difference.

The critical aspects are now which database we use and how well the vector database performs. Nevertheless, we now have a solution for injecting specific, up-to-date knowledge into large language models, making them more helpful for our needs.